Google Glass, launched a few years ago, looks like normal eyeglasses, but it is made of a special glass and has a user interface (UI). It works like a smartphone on which you can see the time of day, check news, read books, and much more; the text appears as an overlay or a floating image in the air. At the same time, you can continue seeing your surroundings, just as you do with an ordinary pair of glasses.

Google Glass is innovative and is likely to become one of the most advanced gadgets in the future. Since these smart glasses are expensive, it is difficult for most people to experience the technology. Hence, we will try to design our own smart glasses.

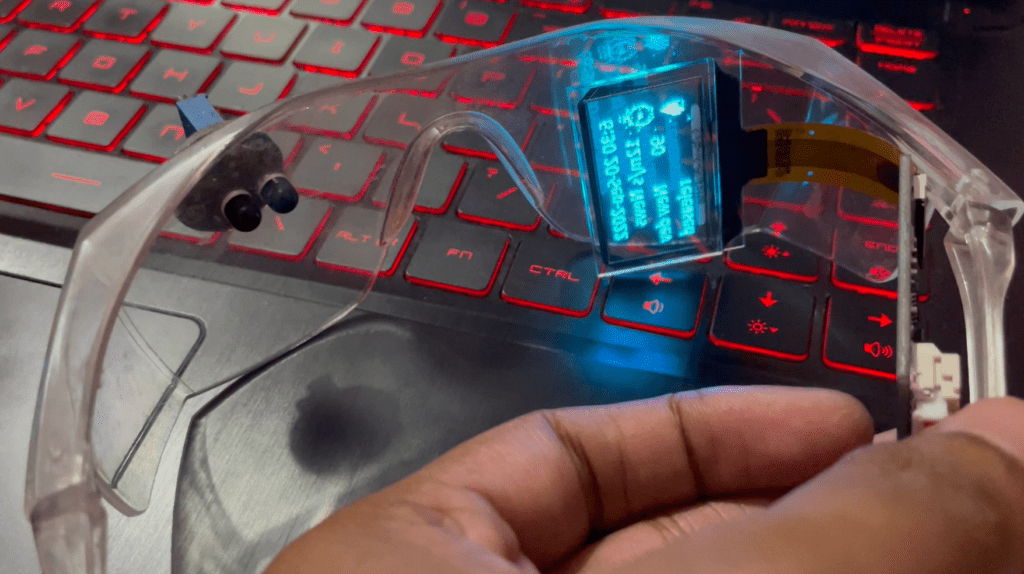

An image of Google Glass is shown in Fig. 1 and the author's prototype of smart glasses is shown in Fig. 2. The components required for building the smart glasses, which are somewhat similar to Google Glass, are listed in the Bill of Materials table.

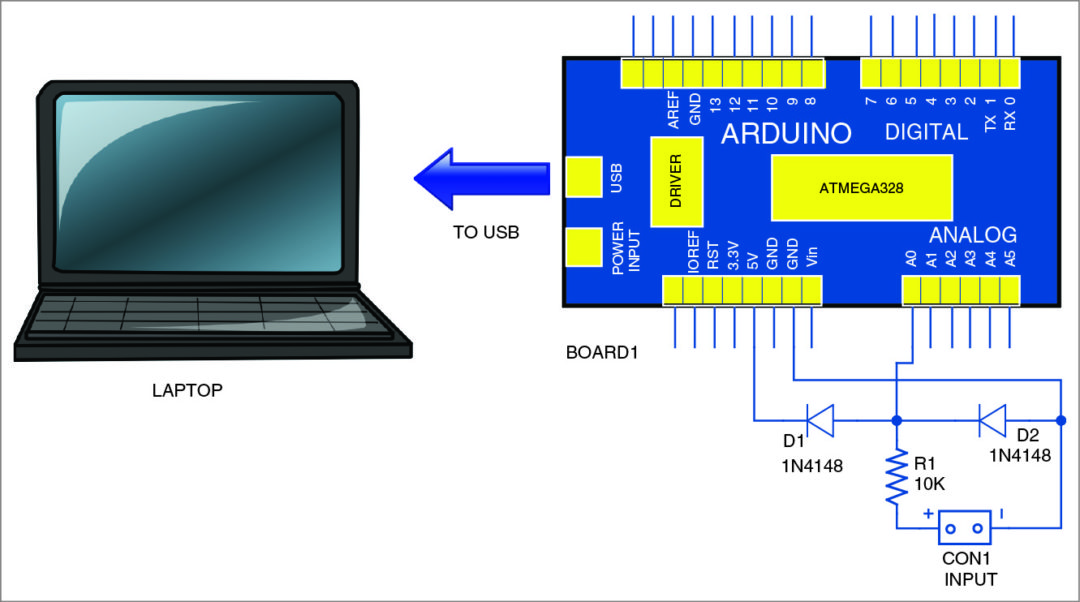

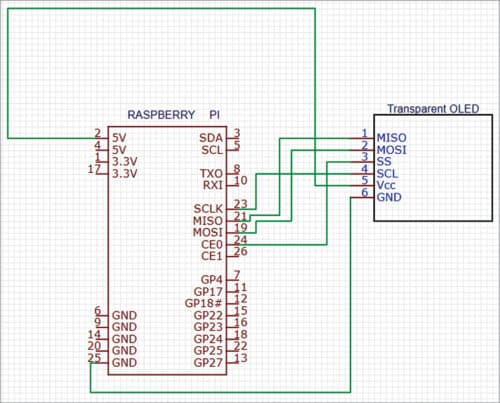

Fig. 3 shows the circuit diagram of our smart glasses, built using a Raspberry Pi and a transparent OLED display. Replace one lens in an ordinary pair of glasses with the transparent OLED display and connect it to the Raspberry Pi and the battery.

Bill of Materials

| Component | Quantity | Description |
|---|---|---|
| Raspberry Pi Zero W | 1 | SBC for smart glasses |
| Transparent OLED display | 1 | 2.9cm OLED display |
| Eyeglasses | 1 | Ordinary pair of eyeglasses |

Software

We need Python to write the code for interfacing with and configuring the OLED display. The latest version of Raspbian OS comes with Python 3 preinstalled. If you are not using the latest version, install Python and its IDE first. Next, install the libraries and modules for interfacing the display, and then any other Python modules and libraries needed for the functions you want in your smart glasses.

A simple reference design of smart glasses that display time and text information on the eyeglasses is given here. To install the required libraries and modules, open the Linux terminal and run the following commands:

wget http://www.airspayce.com/mikem/bcm2835/bcm2835-1.71.tar.gz

tar zxvf bcm2835-1.71.tar.gz

cd bcm2835-1.71/

sudo ./configure && sudo make && sudo make check && sudo make install

sudo apt-get install wiringpi

# For Raspberry Pi systems after May 2019 (earlier systems may not need this step), you may need to upgrade:

wget https://project-downloads.drogon.net/wiringpi-latest.deb

sudo dpkg -i wiringpi-latest.deb

Check the installation using the following:

gpio -v

Install the Python modules:

sudo apt-get install python3-pil

sudo apt-get install python3-numpy

sudo pip3 install RPi.GPIO

sudo pip3 install spidev
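Before moving on, it can help to confirm that Python 3 can actually find the modules installed above. The following is a minimal sketch and not part of the original project: the helper names `missing_modules` and `report` are hypothetical, and the import names (`PIL`, `numpy`, `RPi.GPIO`, `spidev`) are assumed to be the ones these packages normally expose.

```python
import importlib.util

# Import names assumed for the packages installed above
# (python3-pil -> PIL, python3-numpy -> numpy, RPi.GPIO, spidev).
REQUIRED = ["PIL", "numpy", "RPi.GPIO", "spidev"]


def missing_modules(names):
    """Return the names from `names` that Python cannot locate."""
    missing = []
    for name in names:
        try:
            found = importlib.util.find_spec(name) is not None
        except (ImportError, ValueError):
            # Raised, for example, when a parent package such as RPi is absent
            found = False
        if not found:
            missing.append(name)
    return missing


def report(names=REQUIRED):
    """Print one status line per module; return True if all were found."""
    absent = missing_modules(names)
    for name in names:
        print(f"{name}: {'missing' if name in absent else 'ok'}")
    return not absent
```

Calling `report()` in a Python 3 session on the Raspberry Pi prints one line per module; any module reported as missing should be reinstalled before continuing.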

Next, download the transparent OLED Python library and extract it:

sudo apt-get install p7zip-full

sudo wget https://www.waveshare.com/w/upload/2/2c/OLED_Module_Code.7z

7z x OLED_Module_Code.7z -O./OLED_Module_Code

cd OLED_Module_Code/RaspberryPi

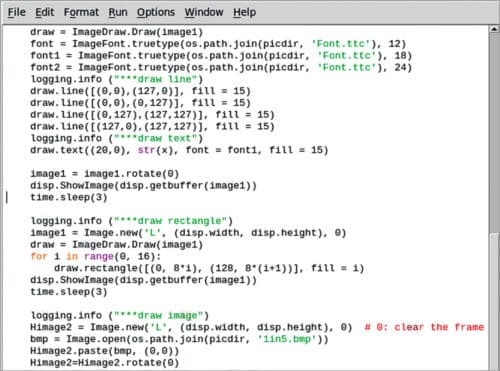

To start coding, first import the Python modules and libraries. For the prototype, the datetime library and the OLED display modules and libraries were imported. You can also import other libraries and modules if you want to add more functions to the smart eyeglasses.
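As an illustration of what such code might look like, here is a minimal sketch that prepares the time, date, and a custom message as an image with Pillow. It is not the author's source code: the function names `build_lines` and `render_frame` are hypothetical, and the 128x64 resolution and 12-pixel line spacing are assumptions that should be adjusted to your panel.

```python
from datetime import datetime


def build_lines(now, message):
    """Return the text lines to show: time, date, then a custom message."""
    return [now.strftime("%H:%M"), now.strftime("%d %b %Y"), message]


def render_frame(lines, size=(128, 64)):
    """Draw the lines onto a 1-bit Pillow image.

    The 128x64 default size is an assumption; use your display's resolution.
    """
    # Imported here so build_lines() still works without Pillow installed
    from PIL import Image, ImageDraw, ImageFont

    image = Image.new("1", size, 0)      # start with all pixels off
    draw = ImageDraw.Draw(image)
    font = ImageFont.load_default()
    y = 0
    for line in lines:
        draw.text((0, y), line, font=font, fill=255)
        y += 12                          # assumed line spacing in pixels
    return image
```

For example, `render_frame(build_lines(datetime.now(), "Hello"))` returns an image that can then be handed to the display driver from the downloaded OLED_Module_Code library. The exact driver call depends on the panel model and is not shown here; some drivers may also expect the pixel values inverted.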

Note. You can download the source code from the link at the end of this article.

Construction and testing

Our smart glasses are now ready for use. On powering the Raspberry Pi and running the code, you can see information displayed on the transparent OLED, such as the date and time, along with any other information that you have set in the code. The information appears as an overlay or an image floating in the air, which you may have seen in some science fiction movies.
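To keep the displayed time current, the code needs to redraw it periodically. One possible approach, sketched below under stated assumptions, is to poll the clock once per second and redraw only when the text changes. The function name `run_clock`, its parameters, and the `draw` callback are all hypothetical; `draw` stands in for whatever routine pushes text to your display.

```python
import time
from datetime import datetime


def run_clock(draw, now=datetime.now, sleep=time.sleep, max_checks=None):
    """Call draw(text) whenever the HH:MM text to display changes.

    `draw` is a hypothetical callback that pushes text to the display.
    `max_checks` limits the loop for testing; None runs until interrupted.
    """
    last_shown = None
    checks = 0
    while max_checks is None or checks < max_checks:
        text = now().strftime("%H:%M")
        if text != last_shown:       # redraw only when the text changes
            draw(text)
            last_shown = text
        sleep(1)                     # poll the clock once per second
        checks += 1
```

Calling `run_clock(print)` prints the time once per minute until interrupted with Ctrl+C. Redrawing only on change avoids needless display updates, which may also help a battery-powered build.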

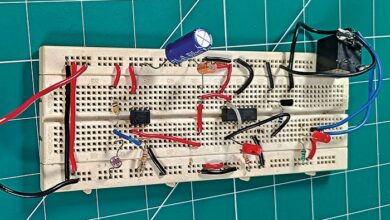

The author's final prototype used for testing is shown in Fig. 6. This is the first version of the project. In the next version, we will try to add features such as controlling the display using the eyes and getting notifications from the phone.

Download source code

Ashwini Kumar Sinha is a technology enthusiast at EFY